When implementing Delta Sharing, here are some best practices to keep in mind.

- Keep shares small and purposeful: one share per product, avoid “kitchen sink” shares

- Schema contracts: publish breaking changes under a new versioned name (e.g., dp_orders_v2)

- Select only columns you need; apply filters for predicate pushdown

- For Power BI, mind the row limit behavior in Power Query

- Incremental loads: prefer Change Data Feed (CDF) over re-reading entire tables

- Security: favor OIDC federation over long-lived bearer tokens where possible (better rotation, MFA)

- Auditing: one recipient per share simplifies revocation and tracking
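The incremental-load advice above implies some bookkeeping on the consumer side: remember the last commit version you processed and only request newer ones. A minimal sketch of that bookkeeping follows. The function name, the `max_span` cap, and the idea of storing the last processed version yourself are assumptions for illustration, not part of Delta Sharing.

```python
def next_window(last_processed_version, latest_version, max_span=100):
    """Return the (start, end) commit versions to request next, or None.

    last_processed_version: highest version already stored locally.
    latest_version: newest version currently available in the share.
    max_span: hypothetical cap on how many versions to fetch per run.
    """
    if latest_version <= last_processed_version:
        return None  # nothing new to fetch
    start = last_processed_version + 1
    end = min(latest_version, start + max_span - 1)
    return start, end
```

The returned pair can be used as the starting and ending version of a change feed read, after which the stored version is advanced to `end`.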

Connecting to a share

Activation (open sharing):

- The provider creates a recipient (open sharing) and gets the activation link

- The recipient opens the link to download the credentials file (.share) or completes OIDC setup

- The recipient opens the .share credentials file, which is a JSON document

- They use the credentials to connect from Spark, pandas, Power BI, or Tableau
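As a rough illustration of the credentials file mentioned in the steps above, the sketch below parses a profile and checks it before use. The field names follow the open-source Delta Sharing profile format; the endpoint, token, and expiry values are placeholders, and the helper names are hypothetical.

```python
import json
from datetime import datetime, timezone

# Placeholder profile: field names follow the open-source Delta Sharing
# profile format, the values are made up for illustration.
EXAMPLE_PROFILE = """
{
  "shareCredentialsVersion": 1,
  "endpoint": "https://sharing.example.com/delta-sharing/",
  "bearerToken": "<token>",
  "expirationTime": "2030-01-01T00:00:00.000Z"
}
"""

REQUIRED_FIELDS = ("shareCredentialsVersion", "endpoint", "bearerToken")


def parse_profile(text):
    """Parse a profile and fail early if a required field is missing."""
    profile = json.loads(text)
    missing = [name for name in REQUIRED_FIELDS if name not in profile]
    if missing:
        raise ValueError(f"profile is missing fields: {missing}")
    return profile


def is_expired(profile, now=None):
    """Return True if the optional expirationTime lies in the past."""
    expires = profile.get("expirationTime")
    if expires is None:
        return False  # no expiry recorded in the file
    # Older Python versions do not accept a trailing "Z" in fromisoformat().
    expires_at = datetime.fromisoformat(expires.replace("Z", "+00:00"))
    return expires_at <= (now or datetime.now(timezone.utc))
```

Checking the expiry up front can help tell an expired credential apart from other causes of a 401/403 response.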

Example: Apache Spark (PySpark)
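A minimal PySpark sketch, assuming the Delta Sharing Spark connector is available to your Spark session. The profile path, share, schema, table, and column names are hypothetical; replace them with the values from your own share.

```python
def table_url(profile_path, share, schema, table):
    """Build the '<profile>#<share>.<schema>.<table>' address used by the connectors."""
    return f"{profile_path}#{share}.{schema}.{table}"


def read_shared_table(spark, url, columns=None):
    """Load a shared table as a Spark DataFrame, optionally keeping only some columns."""
    df = spark.read.format("deltasharing").load(url)
    return df.select(*columns) if columns else df


# Example usage (needs a running SparkSession named `spark`):
# url = table_url("/path/to/config.share", "my_share", "my_schema", "dp_orders_v2")
# read_shared_table(spark, url, columns=["order_id", "created_at"]).show(10)
```

Selecting only the columns you need keeps the amount of data pulled from the share small.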

Example: pandas (Python)
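A minimal sketch using the open-source `delta-sharing` Python package: list what the credentials file gives access to, then load one table into pandas. The helper names and any paths passed to them are hypothetical.

```python
def list_tables(profile_path):
    """Return every table visible to this recipient as 'share.schema.table'."""
    import delta_sharing  # imported lazily; install with: pip install delta-sharing

    client = delta_sharing.SharingClient(profile_path)
    return [f"{t.share}.{t.schema}.{t.name}" for t in client.list_all_tables()]


def load_table(profile_path, qualified_name):
    """Load one shared table into a pandas DataFrame."""
    import delta_sharing

    return delta_sharing.load_as_pandas(f"{profile_path}#{qualified_name}")


# Example usage:
# for name in list_tables("/path/to/config.share"):
#     print(name)
# df = load_table("/path/to/config.share", "my_share.my_schema.dp_orders_v2")
```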

Example: Power BI / Power Query (Desktop)

- Get Data → Delta Sharing

- Paste in the Delta Sharing Server URL and the bearer token from the credentials file (or use OIDC if configured).

- Choose your table or tables and load (Import).

Example: Tableau

- Install “Delta Sharing by Databricks” from Tableau Exchange.

- In Tableau: Connect → Delta Sharing by Databricks → either upload the .share file or enter Endpoint URL and Bearer Token.

Example: Java

Retention and history

Delta table history (metadata) is typically retained for 30 days. Older versions may be removed, and time-travel reads might no longer be possible after the retention period expires or VACUUM runs. Plan to copy or snapshot the data locally if you need long-term historical access. Delta Sharing is read-only; recipients can always land extracts locally if they need to keep records beyond the retention window.

Connector quick links

- Delta Sharing overview (Databricks docs)

- Open-source Delta Sharing repo (protocol, Python & Spark connectors, examples)

- Read shared data with Spark / pandas / Power BI using credential files

- Spark format(“deltasharing”) examples (read, CDF, streaming)

- Power BI / Power Query - Delta Sharing connector

- Tableau - “Delta Sharing by Databricks” connector (Tableau Exchange)

- Delta Sharing Java connector (labs/community)

Troubleshooting

- 401/403 unauthorized: credential expired/revoked, token missing, or OIDC not configured. Regenerate activation link or confirm OIDC.

- CDF is not enabled: request provider to enable history sharing or use full reads.

- Power BI shows limited rows: adjust Power Query row limits and apply filters.

- Historical versions unavailable: likely vacuumed or beyond retention; snapshot/copy locally for long-term needs.

Code snippets

Spark SQL
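A sketch of the Spark SQL route, driven from Python: register the shared table under a local name and query it with plain SQL. The `CREATE TABLE ... USING deltaSharing LOCATION` form follows the open-source connector's documentation; the local table name and the helper functions are hypothetical.

```python
def create_table_sql(local_name, url):
    """Return a CREATE TABLE statement that maps a shared table to a local name."""
    return (
        f"CREATE TABLE IF NOT EXISTS {local_name} "
        f"USING deltaSharing LOCATION '{url}'"
    )


def register_and_count(spark, local_name, url):
    """Register the shared table, then count its rows through Spark SQL."""
    spark.sql(create_table_sql(local_name, url))
    return spark.sql(f"SELECT COUNT(*) AS n FROM {local_name}").collect()[0]["n"]


# Example usage (needs a running SparkSession named `spark`):
# register_and_count(spark, "orders", "/path/to/config.share#my_share.my_schema.dp_orders_v2")
```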

Spark CDF window (PySpark)
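A sketch of reading a bounded window of the change feed with PySpark. The `readChangeFeed`, `startingVersion`, and `endingVersion` options come from the open-source Spark connector; the helper names and the version numbers in the usage comment are hypothetical, and the read only works if the provider has enabled history sharing for the table.

```python
def cdf_options(starting_version, ending_version=None):
    """Build the reader options for a change feed window (bounds are inclusive)."""
    options = {"readChangeFeed": "true", "startingVersion": str(starting_version)}
    if ending_version is not None:
        if ending_version < starting_version:
            raise ValueError("ending_version must not be lower than starting_version")
        options["endingVersion"] = str(ending_version)
    return options


def read_changes(spark, url, starting_version, ending_version=None):
    """Return the change records for the requested versions as a Spark DataFrame."""
    reader = spark.read.format("deltasharing")
    return reader.options(**cdf_options(starting_version, ending_version)).load(url)


# Example usage (needs a running SparkSession named `spark`):
# changes = read_changes(spark, "/path/to/config.share#my_share.my_schema.dp_orders_v2", 5, 10)
# changes.select("_change_type", "_commit_version", "_commit_timestamp").show()
```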

Pandas quick peek
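A sketch for a quick look at a table without pulling all of it, using the `limit` parameter of `load_as_pandas` from the open-source `delta-sharing` package. The helper name and the default of 10 rows are assumptions.

```python
def peek(url, rows=10):
    """Fetch a small sample of a shared table as a pandas DataFrame."""
    import delta_sharing  # imported lazily; install with: pip install delta-sharing

    # `limit` asks the connector to load only about `rows` rows.
    df = delta_sharing.load_as_pandas(url, limit=rows)
    return df.head(rows)


# Example usage:
# print(peek("/path/to/config.share#my_share.my_schema.dp_orders_v2"))
```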

New delete pattern

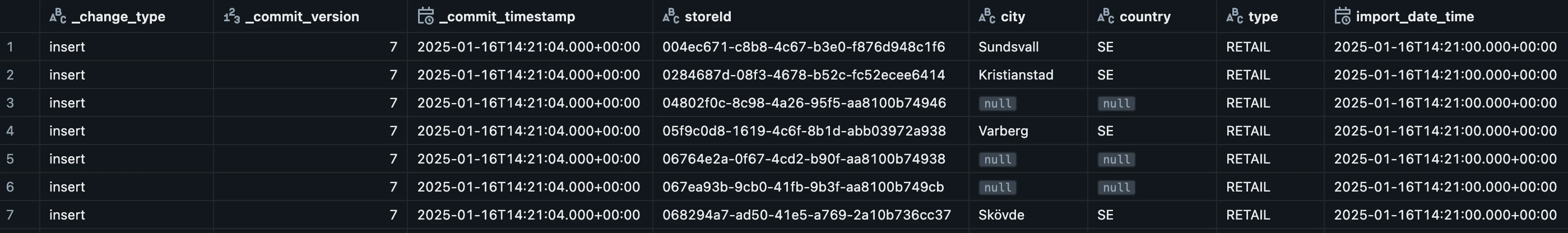

To clarify which data is stored and made available through Delta Share, a new delete pattern has been introduced (as of January 2026). Depending on how consumers of Delta Share have implemented their integrations, this change may require adjustments on their side.

Delta Share allows customers to incrementally retrieve changes to their data and store those changes in their own data warehouse or similar storage solution. This is achieved by storing every batch of new or modified records with a commit version tag. Consumers can then download all data associated with a specific commit version.

When new or updated data is added to Delta Share, the record receives the value “insert” in the _change_type column.

The column _commit_version indicates which version the record belongs to, and _commit_timestamp shows when the record was added.

The new pattern adds a _change_type value named “delete”. A delete record is generated for any row whose import_date_time is older than 30 days.

This record does not modify the original data. All fields remain unchanged. Instead, it serves as a technical log entry indicating that the corresponding row will be removed from Delta Share.

Consumers of Delta Share may choose to ignore these delete records or to mirror them by removing the corresponding rows from their own storage.
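The two consumer choices described above can be sketched in plain Python as follows. The `_change_type`, `_commit_version`, and `_commit_timestamp` columns come from the text; the `id` key column, the function name, and the `honor_deletes` switch are assumptions for illustration.

```python
METADATA_COLUMNS = ("_change_type", "_commit_version", "_commit_timestamp")


def apply_changes(local_rows, changes, key="id", honor_deletes=True):
    """Apply change records to a local copy held as {key value: row dict}.

    honor_deletes=True removes rows that Delta Share reports as deleted;
    honor_deletes=False ignores delete records and keeps the local history.
    """
    # Process older commits first so later versions win.
    for record in sorted(changes, key=lambda r: r["_commit_version"]):
        change_type = record["_change_type"]
        if change_type == "insert":
            # Store the data columns only, without the change feed metadata.
            local_rows[record[key]] = {
                name: value
                for name, value in record.items()
                if name not in METADATA_COLUMNS
            }
        elif change_type == "delete" and honor_deletes:
            local_rows.pop(record[key], None)
    return local_rows
```

Keeping `honor_deletes=False` suits consumers who want to retain records beyond the 30-day window in their own warehouse.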

FAQ

Here are some frequently asked questions about this integration.

When using “query” to “fetch everything,” what does that mean?

This means you only have access to what’s currently part of the share and not every piece of data stored in Engage.

Is there a time window to consider for “query”?

In “changes” only certain versions are available. Why?

- _change_type

- _commit_version

- _commit_timestamp

What determines the versions available in the change feed?

What does it mean when a table has no version history?

- Change Data Feed (CDF) or version tracking is not enabled for that share, or

- All historical versions have been cleaned up by the retention policy.

Why am I getting 'Bad request — Cannot load table version 0…'?